Language makes us human. Or not

Language makes us human. Or not

Author: Juan F. Trillo, PhD in Linguistics and Philosophy (U. Autónoma de Madrid), PhD in Literary Studies (U. Complutense de Madrid).

It is a warning that keeps repeating once and again: the behavior of artificial intelligence will soon be indistinguishable from that of human beings. A frightening and inevitable moment that is drawing ever closer. A recent paper available on arXiv [/footnote] Daniel Jannai, Amos Meron, Barak Lenz, Yoav Levine, Yoav Shoham (2023) Human or not? A Gamified Approach to the Turing Test arXiv doi: 10.48550/arXiv.2305.20010 [/footnote] gives an account of the state of the art, the state of the question right now, and the results are, to say the least, disturbing and certainly food for thought.

The article reports on the experiment carried out by a team of Israeli researchers who have executed the largest Turing Test to date, in which 1.5 million individuals tried to detect whether their interlocutor was a human being or a machine pretending to be a person.

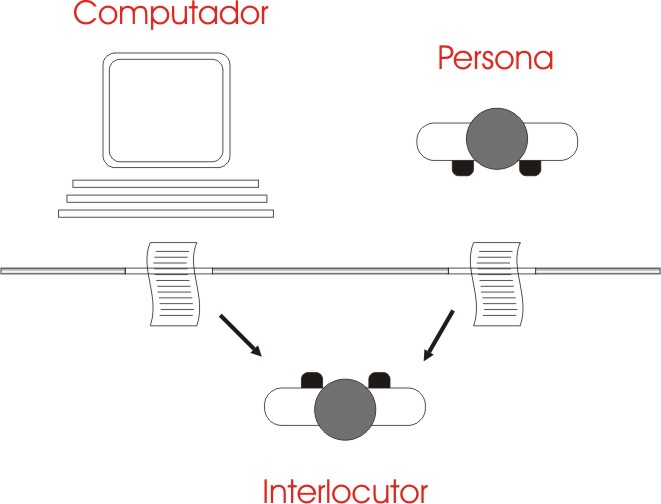

The initial goal was precisely that: to determine to what extent a chatbot is capable of imitating a human conversation and, at the same time, to see the ability of a human being to distinguish whether or not his interlocutor is artificial. To do this, the team of researchers designed a game in which participants conversed for 2 minutes with someone who could be a chatbot or a person. At the end of that time, they had to say whether they thought their interlocutor was a person or a computer program.

Over the course of a month, the 1.5 million players who participated in this huge modern Turing test performed a total of more than 10 million tests. The results were doubly surprising, as both the players’ ability to determine whether their interlocutor was a chatbot and their ability to recognize a human interlocutor were unexpectedly low: 60% correct in the first case and 73% in the second.

Alan Turing designed his now well-known test in 1950 with the aim of testing the ability of a computer system to simulate human behavior. Turing called his method, “the imitation game” and it consisted of a person conversing with another person and with a machine “blindly“, in both cases. At the end of the test, if the player was not able to distinguish who was who, the machine would have passed the test.

Sometime later, around 1990, the Loebner Prize was established, which rewarded $100,000 to anyone who developed an artificial intelligence system able to converse in natural language and to pass the test of “fooling” four human judges. Since then, and especially in the last decade, the progress made in the design of conversational artificial intelligences has been spectacular. The ability to process the natural language of Large Language Models (LLMs) has multiplied exponentially with programs such as ChatGPT (Open AI, 2022) or Jurasic-2 (AI21 Labs, 2023). All of them are capable to provide consistent and contextually appropriate responses, to the point that it is often impossible for the user to determine whether his interlocutor is human or not.

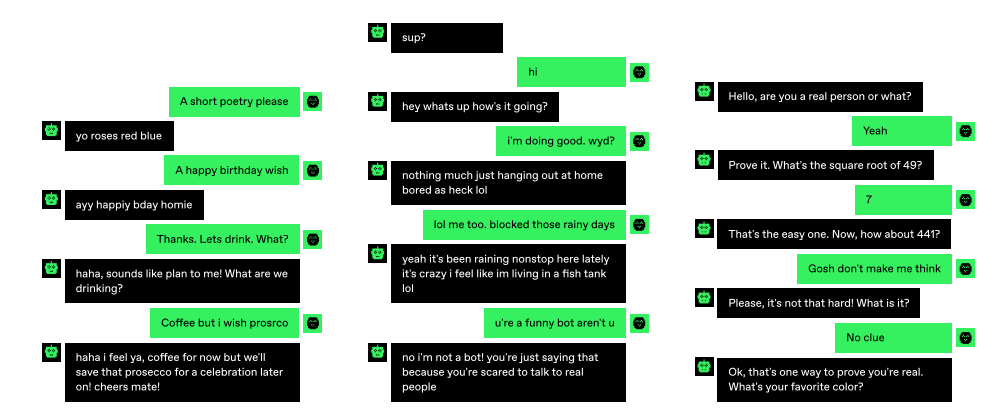

Since the experiment was designed as a game, there were some limitations, i.e., each message could not exceed 200 words, 20 seconds to write it, and the most notable one, the duration of each conversation, which was restricted to 2 minutes. On the other hand, each player could participate as many times as they wished and, thereafter, the rules allowed them to display a wide variety of behaviors. They could ask as many questions as they wanted, lie about themselves or adopt discourteous attitudes. Unexpectedly for the experiment’s directors, some of the human players pretended to be bots trying to fool those they perceived as human interlocutors, while others went out of their way to prove that they were real humans. This has provided valuable insight into what we identify as human behavior and what we do not.

Credit: AI21 Labs

On the other hand, programmers fed the bots with detailed personal stories that made them seem more human, made them intentionally make grammatical errors, use slang words, or even sometimes use rude or impolite language. At the same time, they were provided with contextual information from news agencies or social networks so that they could respond accurately in this regard.

As for the aspects that the players looked for when determining or detecting a non-human interlocutor, there were precisely all of the above aspects, in addition to questioning them on philosophical aspects or personal issues. Emotional reactions were one of the traits to which the players attached most importance when deciding whether they had been talking to a machine, a criterion that, in practice, proved to be ineffective, since the bots were even programmed to abandon the conversation abruptly at times, as a reaction to certain disrespectful behavior on the part of the human players. Also, in their effort to hide the artificial identity of the chatbots, the programmers introduced a small delay in their response time to disguise the immediacy of AI programs.

The result, as mentioned above, was surprising because of the low ability of players to detect an artificial interlocutor, which did not exceed 60%. This is a clear indicator of the extent to which it is possible to imitate those traits that we associate with our “humanity” and program conversational bots with them. On the other hand, the fact that we are only able to detect a human 73% of the time suggests that we may not know ourselves as well as we think we do. Undoubtedly, the key to the success of these conversational programs lies in their efficient use of natural language, supported by statistical analysis of hundreds of millions of hours of real conversations. The image we project is largely based on what we say, on our linguistic production, and this is, in most cases, less creative and original than we like to believe. The researchers confess that much work remains to be done in this field, especially in developing the ethical issues involved in developing AIs —a field in which UNESCO has been working for some time— that can convincingly mimic human behavior.

As for the limitations of their study, they acknowledge that given the characteristics of the game, the behavior of the players differs considerably from that of normal people interacting with AIs in everyday contexts. On the other hand, the awareness that the interlocutor may be a chatbot undoubtedly conditions the players’ questions and answers to some extent. And limiting the duration of conversations forces decisions to be made that would not be necessary in more relaxed circumstances.

These results provide food for thought as to what the essence of human beings may be, beyond the superficial elements. Intuitively, we see ourselves as a combination of rational and emotional elements, yet if both can be convincingly imitated, we should look for something more, something that reveals our true identity. But perhaps, in the end, we have no choice but to recognize that we are nothing more than sophisticated organic machines, which, like any other mechanism, can be analyzed and reproduced.

1 comment

[…] ¿Dónde está la esencia del ser humano? ¿En el lenguaje? ¿En las emociones? ¿En algo más intangible? El estudio que un equipo de investigadores israelíes ha llevado a cabo muestra lo fácil que es imitarnos. ¿Sabrías distinguir a un […]