AI: So far, so good

Author: Juan F. Trillo, PhD in Linguistics and Philosophy (U. Autónoma de Madrid), PhD in Literary Studies (U. Complutense de Madrid).

At a time when the mass media are full of predictions about the apocalyptic effect that AI will have on humanity, it is comforting to read two essays showing that reality, for the moment, points in the opposite direction. The first reports the excellent results that natural language processing (NLP) is producing in the translation of ancient Akkadian texts, some of them nearly 5,000 years old and written in cuneiform, a writing system that is particularly difficult to interpret. The second describes advances in remote surgery, in which AI assists with operations that until recently were performed exclusively by humans. These machine-assisted interventions are reaching previously unthinkable levels of precision and safety.

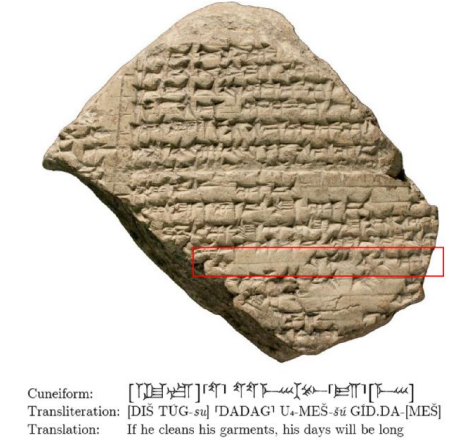

The first of these articles (1), written by a team from the School of Computer Science at Tel Aviv University, Israel, and published in PNAS Nexus (Oxford University Press), reports on the progress made in translating cuneiform texts using convolutional neural networks (CNN). Cuneiform is one of the most extraordinary advances in human history: it was the first structured writing system and accompanied the emergence of the first known civilizations. Developed by the Sumerian culture around 3500 BC, it was used for more than three millennia to represent languages such as Babylonian, Elamite, Assyrian, Hittite, Sumerian and Akkadian, until it was finally replaced by alphabetic writing around the beginning of our era. It is, however, an exceptionally complex system of graphic representation. It was written on clay tablets with a wooden awl or stylus sharpened into the shape of a wedge (in Latin *cuneus*, from which the name derives). This method, although rudimentary in appearance, has proved surprisingly durable, and several hundred thousand cuneiform texts are available today. One wonders how much of what we write today will withstand a similar period of time.

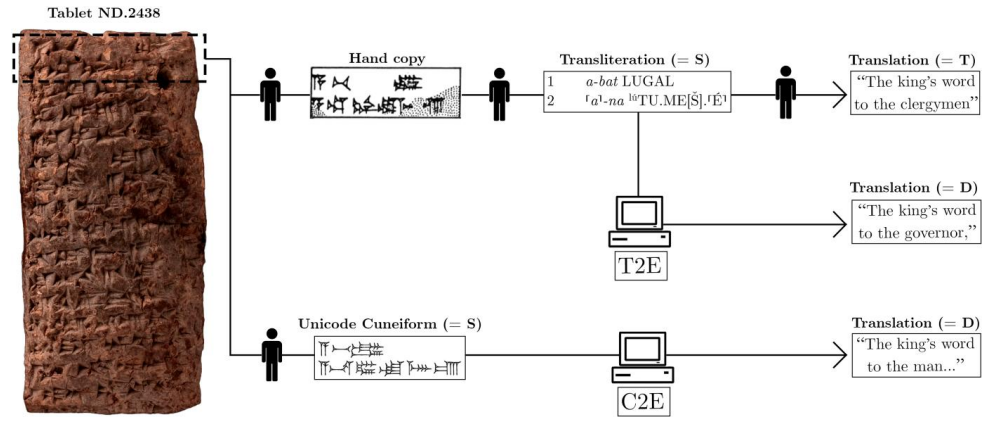

Although digital tools have become commonplace in the translation of written texts, this is the first time that neural machine translation (NMT) has been applied to texts written in Akkadian (ca. 2700 BCE – 75 CE). The neural model is available online, and the source code can be consulted on GitHub, under the name Akkademia. The translated texts are in Assyrian and Babylonian, both dialects of Akkadian, and were supplied to the model in two different forms. The first consists of Unicode cuneiform glyphs, the computational equivalent of the signs on the original clay tablets; this task was named C2E (Cuneiform to English). The second, T2E (Transliteration to English), starts from transliterations of the cuneiform texts into the Latin alphabet, the representation commonly used in scholarly studies of Akkadian. Although C2E is considered a much more complex task than T2E, the score for T2E (37.47) was only slightly higher than the score for C2E (36.52), both measured with the BLEU4 (Bilingual Evaluation Understudy 4) metric.
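
To give a sense of what a BLEU4 figure measures, here is a minimal sketch of a sentence-level score with uniform weights over 1- to 4-grams and no smoothing. It is an illustration only, not the evaluation code used in the paper: the function names `ngrams` and `bleu4` and the example sentences are assumptions made here, and published scores are normally computed over a whole test corpus rather than a single sentence.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count every contiguous n-gram in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu4(candidate, reference):
    """Sentence-level BLEU on a 0-100 scale (hypothetical helper, no smoothing)."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, 5):
        cand_counts = ngrams(cand, n)
        ref_counts = ngrams(ref, n)
        total = sum(cand_counts.values())
        # Clip each candidate n-gram count by its count in the reference.
        clipped = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        if total == 0 or clipped == 0:
            return 0.0  # without smoothing, one empty precision zeroes the score
        log_precisions.append(math.log(clipped / total))
    # Brevity penalty: punish candidates shorter than the reference.
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return 100 * bp * math.exp(sum(log_precisions) / 4)


# Invented example sentences that differ in a single word.
score = bleu4("the king sent a letter to the city",
              "the king sent a message to the city")
print(round(score, 2))  # 50.0
```

One changed word in an eight-word sentence already halves the score, which helps explain why figures in the high thirties count as a good result for a language as hard as Akkadian.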

The translation of cuneiform texts (whether human or mechanical) usually runs into several practical problems: many texts are incomplete, context is often missing, and the signs are polyvalent, since each can perform different grammatical functions. As a result, the same text often admits several alternative translations, and it is not possible to determine at first which is the correct one. In addition, as texts increase in length, the mechanical system becomes increasingly unreliable, producing incorrect translations or “hallucinations” (translations that make sense but do not correspond to the source). The researchers, however, believe that this problem should be easy to solve: since cuneiform script is divided into lines with a small number of characters, it would be sufficient to give the program the content of each line separately. In the near future, the researchers hope that the NMT translator will also list the sources on which it has based its translation, making it a valuable aid to scholars of this historical period.
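
The line-by-line remedy can be sketched in a few lines of code. This is a hedged illustration, not the Akkademia interface: `translate_by_line` is a hypothetical helper, and the `translate_line` argument stands in for whatever model call actually translates one short line.

```python
from typing import Callable, List


def translate_by_line(text: str, translate_line: Callable[[str], str]) -> List[str]:
    """Translate each non-empty line separately, so the model never sees a long input."""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    return [translate_line(line) for line in lines]


# Placeholder "translator" (str.upper) used only to show the data flow.
tablet = "first line of the tablet\n\nsecond line of the tablet"
print(translate_by_line(tablet, str.upper))
```

Keeping one output per input line also makes it easy for a scholar to check each translated line against the corresponding line of the tablet.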

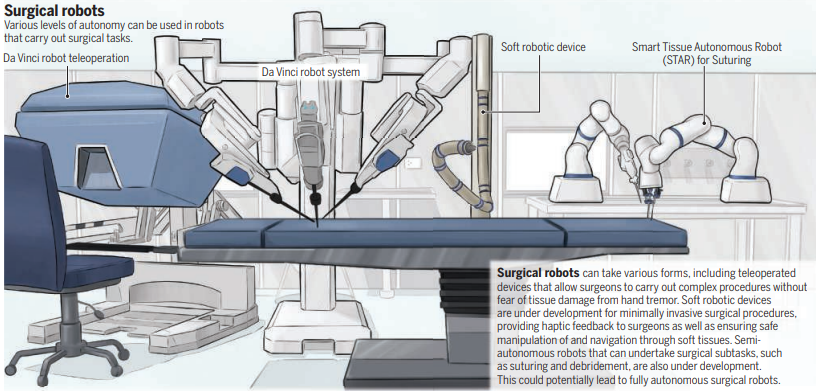

The second of the aforementioned articles (2) reveals the most recent advances in medical robotics. Mechanical assistance in different medical tasks, such as diagnosis, surgery or rehabilitation, is not new; however, the application of artificial intelligence is taking this human-machine collaboration to new levels of efficiency. The advances made in recent years in the areas of image analysis, navigation, precise manipulation and machine learning suggest that the day is not far off when autonomous robots will perform medical tasks in which human intervention is essential today.

Of all these functions, perhaps the most critical is image analysis, as it is crucial to correctly identify lesions or parts of the human anatomy on which to intervene later. Until now, AI support has mainly focused on developing algorithms that allow robotic instruments to navigate through tissue during surgery. In recent years, however, these tasks have been optimized so that mechanical access to damaged areas has become much safer and more precise. Consequently, efforts are now being directed toward improving the robot’s understanding of the image it perceives and its ability to identify what it is seeing, a task that until now has fallen to the human surgeon. The application of this new and improved ability to interpret what the camera perceives will be oriented, in the first instance, towards performing scans and making remote diagnoses, especially in geographic areas where human healthcare is scarce.

However, much of the researchers’ effort is directed toward a robot capable of performing surgery autonomously. To get there, engineers will have to solve problems involving deep learning networks, reinforcement learning and learning by demonstration. This is where they encounter one of their greatest difficulties: as the procedure evolves, the tissue being operated on changes, and the robot must learn to recognize each new aspect of it. Until very recently, this learning required the assistance of expert surgeons and radiologists, which slowed the whole process down and made it more expensive.

At present, robots supervised by medical specialists perform more than one million surgical procedures per year. The medium-term trend is towards fully autonomous surgical robots, i.e. robots not supervised by human surgeons. Progress in this field is slow, because the tolerance for error in robot-performed surgery is close to zero. This is not because human surgeons are infallible, but because of acceptance by the public and by patients. Traditionally, there has been a certain mistrust and even rejection of operations performed by autonomous robots, despite their very good results in terms of efficiency. For this reason, these procedures are expected to take some time to be implemented. In the meantime, AI is performing subtasks or minor tasks, such as debridement (the removal of damaged tissue and of non-organic material that could lead to infection). Robots perform these tasks autonomously, although closely supervised by people, in what has been called “supervised autonomy”. This would be a first step towards remote interventions or “telesurgery”, which would allow medical interventions in remote or difficult-to-access areas (for example, after natural disasters) that specialized surgical services would struggle to reach. The tests carried out so far have yielded good results, but for the time being this modality has not been approved for clinical use.

The use of AI in medicine has increased sharply in recent years thanks to its excellent results in different medical areas. For example, The Lancet recently reported that the application of artificial intelligence in breast cancer detection has resulted in a 20% increase in the early localization of tumors.

As we said at the beginning, these applications are good examples of how much AI can bring us, both in terms of quality of life and culture. As with nuclear energy, genetic engineering or any other scientific advance, intelligent systems can be used to do good or the opposite. It is up to us to choose how we use our new and growing capabilities, and perhaps now is a good time to remember that with great power comes great responsibility.

References:

- (1) Gai Gutherz, Shai Gordin, Luis Sáenz, Omer Levy, Jonathan Berant. “Translating Akkadian to English with neural machine translation”. PNAS Nexus. DOI: https://doi.org/10.1093/pnasnexus/pgad096

- (2) Michael Yip and Septimiu Salcudean. “Artificial intelligence meets medical robotics”. Science.

2 comments

[…] A review of two scientific essays showing that Artificial Intelligence is, for the moment, proving more beneficial than harmful. For the publication Mapping Ignorance. […]

The material you send is, in general, very interesting and enriching, but the articles on AI and particle physics are fundamental in the way they connect with the social sciences. In this recognition of broadened contexts, the processes relating to human identity make it possible to infer and discover surprising potential.