The complementarity principle

Quantum mechanics was founded upon the existence of the wave–particle dualism of light and matter, and the enormous success of quantum mechanics, including the probability interpretation, seems to reinforce the importance of this dualism. But how can a particle be thought of as “really” having wave properties? And how can a wave be thought of as “really” having particle properties? One could not build a consistent quantum mechanics upon the idea that a light beam or an electron can be described simultaneously by the incompatible wave and particle concepts.

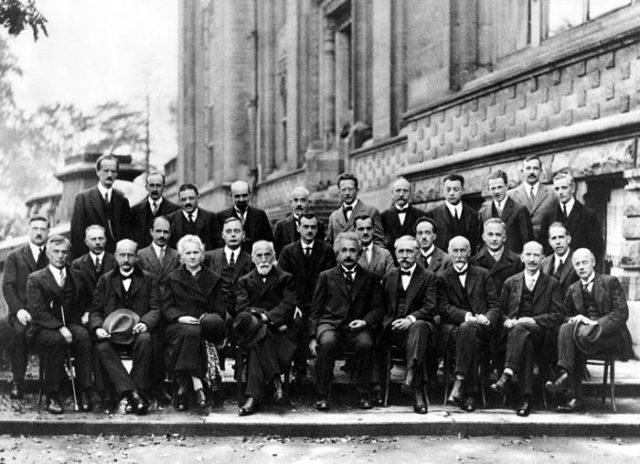

In 1927, Niels Bohr realized that the word “simultaneously” provided the key to a consistent account. Our models, or pictures, of matter and light, he noted, are based upon their behavior in various experiments in our laboratories. In some experiments, such as the photoelectric effect or the Compton effect, light behaves as if it consists of particles; in other experiments, such as the double-slit experiment, light behaves as if it consists of waves. Similarly, in experiments such as J. J. Thomson’s cathode-ray studies, electrons behave as if they are particles; in other experiments, such as the diffraction studies of his son, G. P. Thomson, electrons behave as if they are waves. But light and electrons never behave simultaneously as if they consist of both particles and waves. In each specific experiment they behave either as particles or as waves, but never as both.

This suggested to Bohr that the particle and wave descriptions of light and of matter are both necessary even though they are logically incompatible with each other. They must be regarded as being “complementary” to each other—that is, like two different sides of the same coin. This led Bohr to formulate what is called the Principle of Complementarity:

The wave and particle models are both required for a complete description of matter and of electromagnetic radiation. Since these two models are mutually exclusive, they cannot be used simultaneously. Each experiment, or the experimenter who designs the experiment, selects one or the other description as the proper description for that experiment.

Bohr showed that this principle is a fundamental consequence of quantum mechanics. He handled the wave–particle duality, not by resolving it in favor of either waves or particles, but by absorbing it into the foundations of quantum physics. Like the Bohr atom, it was another bold initiative toward the formulation of a new theory, even though it meant contradicting classical physics.

It is important to understand what the complementarity principle really means. By accepting the wave–particle duality as a fact of nature, Bohr was saying that light and electrons (or other objects) potentially encompass the properties of both particles and waves until they are observed, at which point they behave as if they are either one or the other, depending upon the experiment and the experimenter’s choice. This was a profound statement, for it meant that what we observe in our experiments is not the way nature “really is” when we are not observing it. In fact, nature does not favor any specific model when we are not observing it; rather, it is a mixture of the many possibilities that it could be until we finally do observe it! By setting up an experiment, we select the model that nature will exhibit, and we decide how photons and electrons and protons and even baseballs (in principle) are going to behave: either as particles or as waves.
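
To get a feel for the scales involved, here is a minimal sketch that compares the matter wavelength of an electron with that of a baseball. It assumes the non-relativistic de Broglie relation, wavelength = h / (m v), which the text above does not state explicitly. The function name and the chosen masses and speeds are illustrative assumptions, not values from the article.

```python
# Planck constant in J*s (exact SI value).
PLANCK_H = 6.62607015e-34

# Electron rest mass in kg (CODATA value, rounded).
ELECTRON_MASS_KG = 9.1093837e-31


def de_broglie_wavelength(mass_kg, speed_m_s):
    """Return the non-relativistic de Broglie wavelength h / (m * v) in metres."""
    if mass_kg <= 0 or speed_m_s <= 0:
        raise ValueError("mass and speed must be positive")
    return PLANCK_H / (mass_kg * speed_m_s)


# Illustrative inputs (assumptions): an electron at 10^6 m/s
# and a 145 g baseball thrown at 40 m/s.
electron = de_broglie_wavelength(ELECTRON_MASS_KG, 1.0e6)
baseball = de_broglie_wavelength(0.145, 40.0)

print(f"electron: {electron:.2e} m")
print(f"baseball: {baseball:.2e} m")
```

Under these assumptions the electron's wavelength comes out comparable to the spacing between atoms in a crystal (roughly 10^-10 m), which is why diffraction experiments can reveal its wave behavior. The baseball's wavelength is some 24 orders of magnitude smaller, far below the size of any structure it could diffract from, so in practice only its particle behavior is ever seen.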

In other words, according to Bohr, the experimenter becomes part of the experiment! In so doing, the experimenter interacts with nature, so that we can never observe all aspects of nature “as she really is” by herself. In fact, that phrase, while so appealing, has no operational meaning. Instead, we should say we can know only the part of nature that is revealed by our experiments. (This is no invitation to mysticism. After all, we know even about a good friend only through a patchwork of repeated encounters and discussions, in many different circumstances.) The consequence of this fact, for events at the quantum level, said Bohr, is the uncertainty principle, which places a quantitative limitation upon what we can learn about nature in any given interaction; and the consequence of this limitation is that we must accept the probability interpretation of individual quantum processes. For this reason, the uncertainty principle is often also called the principle of indeterminacy. There is no way of getting around these limitations, according to Bohr, as long as quantum mechanics remains a valid theory.
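
For reference, the quantitative limitation mentioned above is usually written, for position and momentum, in the following standard textbook form. The article itself does not state the formula; the symbols are the conventional ones, with Δx and Δp denoting the spreads (standard deviations) of position and momentum measurements and ħ the reduced Planck constant.

```latex
\Delta x \, \Delta p \;\ge\; \frac{\hbar}{2},
\qquad \hbar = \frac{h}{2\pi}
```

Read this way, the more sharply an experiment pins down position, the wider the spread it must leave in momentum, and vice versa.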

Author: César Tomé López is a science writer and the editor of Mapping Ignorance.
