Freeing the Language Within: on how babies extract words out of sounds.

Michelangelo di Lodovico Buonarroti Simoni

Michelangelo famously claimed he merely carved out sculptures he saw trapped inside the stone. His genius involved a powerful internal vision that he masterfully imposed on external matter. We can think of Michelangelo’s conception of sculpture when we look at human babies acquiring language, because babies, like sculptors, carve the language they hear out of the surrounding sound. Just as the figures Michelangelo saw in the stone originated in Michelangelo’s mind, the languages infants hear in the sound signal also originate in the seeds of language they carry in their minds.

Children can quickly detect a human language in the acoustic signal, using carving tools that remain with us through life. These carving tools involve powerful statistical abilities and an exquisite sense of rhythm and prosody. As children grow and establish the main features of language, they progressively tune into the nuances of their native tongue, and together with this specialization they lose their uncanny talent for quickly finding any new language in a new sound 1.

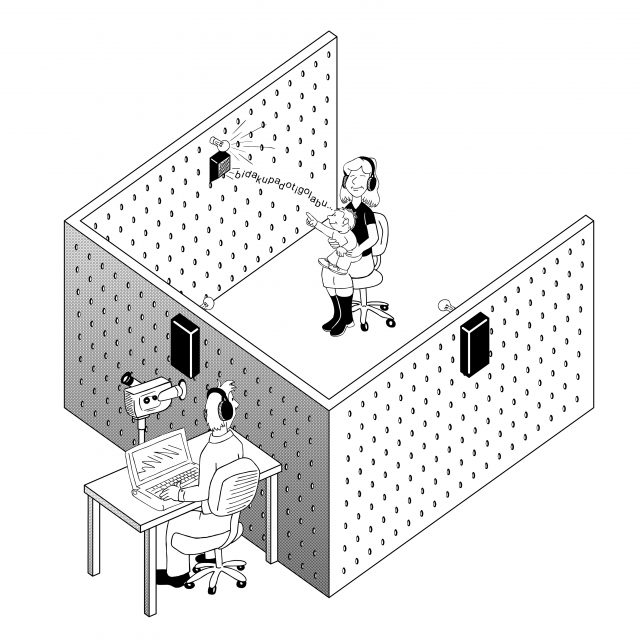

Interpreting as language the sound signal that enters our senses seems so effortless that we mistakenly think there is hardly any brainwork involved in it. But the elements of language (phonemes, words and phrases) are not discrete events in speech, consistently marked by pauses 2. The task of matching the continuous acoustic input with the discrete and combinatorial structure of language is known as segmentation. Segmentation is the first step of language development in infants, and it is a highly complex process, where a myriad of cognitive mechanisms and representations come into play.

Language acquisition was not thought of as a scientific problem until it was brought up by Chomsky, who early on wrote about “the remarkable capacity of the child to generalize, hypothesize and process information in a variety of very special and apparently highly complex ways which we cannot yet describe or begin to understand, and which may be largely innate, or may develop through some sort of learning or maturation of the nervous system”3. Some of these hypothesized mechanisms have been discovered during the last decades, and their study constitutes a thriving frontier in the language sciences.

We now know, for instance, that very young infants compute the statistics of certain aspects of the sound input to discover the structure of language, from syllables to words, from words to phrases, from phrases to grammar. The discovery that babies are capable of rapid and powerful statistical computations on the acoustic input is due to Saffran et al. (1996) 4, who found that 8-month-old babies can detect, given only two minutes of exposure, the transitional probabilities of adjacent syllables in an artificial language designed for experimental purposes. The transitional probability of a syllable pair is the likelihood that the second syllable follows once the first has been heard. Infants use this statistical information to extract chunks of syllables that are more frequent than others, a crucial step that facilitates the identification of words in the continuous speech stream. Given statistical learning, a child exposed to English can determine that, in the four-syllable sequence pre-tty-ba-by, the transitional probabilities from pre to tty and from ba to by are both higher than the transitional probability from tty to ba. Consequently, the child can correctly establish that pretty and baby constitute words, whereas ttyba is not likely to be a word.
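To make the pretty-baby example concrete, here is a minimal Python sketch of how transitional probabilities could be computed over a syllable stream and used to guess word boundaries. Everything in it is an assumption made for illustration: the three-word toy lexicon, the order of the words, the function names, and the fixed `THRESHOLD` cut-off are all hypothetical. It is not the procedure used by Saffran et al., and it is not a claim about how infants actually place boundaries.

```python
from collections import Counter


def transitional_probabilities(syllables):
    """Return TP(y | x) = count(x followed by y) / count(x) for adjacent syllables."""
    pair_counts = Counter(zip(syllables, syllables[1:]))
    # Count each syllable only where it has a successor (the last one has none).
    first_counts = Counter(syllables[:-1])
    return {(x, y): n / first_counts[x] for (x, y), n in pair_counts.items()}


def segment(syllables, tps, threshold):
    """Insert a word boundary wherever the transitional probability dips below threshold."""
    words, current = [], [syllables[0]]
    for x, y in zip(syllables, syllables[1:]):
        if tps[(x, y)] < threshold:
            words.append("".join(current))
            current = []
        current.append(y)
    words.append("".join(current))
    return words


# Hypothetical toy lexicon, already split into syllables.
PRETTY, BABY, DOGGY = ("pre", "tty"), ("ba", "by"), ("do", "ggy")

# Hypothetical word order; the stream itself carries no pauses or boundary marks.
ORDER = [PRETTY, BABY, DOGGY, PRETTY, DOGGY, BABY,
         PRETTY, BABY, DOGGY, BABY, PRETTY, DOGGY]
stream = [syllable for word in ORDER for syllable in word]

THRESHOLD = 0.9  # assumed cut-off, chosen only to suit this toy stream

tps = transitional_probabilities(stream)
words = segment(stream, tps, THRESHOLD)

print(tps[("pre", "tty")], tps[("tty", "ba")])
print(words)
```

In this toy stream every within-word pair (pre-tty, ba-by, do-ggy) has a transitional probability of 1.0, while pairs that straddle a word boundary, such as tty-ba, score lower, so cutting at the low points recovers pretty, baby and doggy. Real speech is far less tidy than this.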

Infants perform statistical computations over properties of the input that are relevant for language and salient to humans; that is, statistical learning is heavily constrained by organism-internal biases that are largely independent of experience. These experience-independent factors reduce the learning space and make the statistical capacities of infants an efficient tool to impose language structure onto the continuous acoustic stream 5. Statistical learning has been found not to be a domain-specific learning mechanism restricted to language; rather, it is a domain-general learning mechanism that can compute the distribution of domain-specific features in the incoming signal, even in other domains such as music and vision 6.

If humans came endowed only with powerful statistical computation abilities, they would not be able to successfully correlate the acoustic signal with linguistic structure. For instance, in order to compute transitional probabilities among syllables, as we know babies do early on in their development, syllables must first be “found” in the speech signal. This is a non-trivial task that humans solve easily, probably because the saliency of syllables as a basic unit to detect in speech is innately available, as suggested by speech perception studies in neonates 7. Without these initial anchors, statistical computation cannot reliably segment words when scaled to a realistic setting (i.e. the language input children actually have access to in development).

Later on, babies deploy more language-specific knowledge of the phonological structure of the language they are tuning into, and compute the distributions of phonemes in words and of word types in phrases given their environments, to detect the basic components of linguistic structure, and determine the way in which these are implemented in their surrounding language, for instance to establish phonological repertoires or the rudiments of grammar 8.

Language segmentation, despite its vast complexity, is an easy task for babies, who accomplish it during their first months of life. As adults, we are totally unaware that we continuously segment and process the acoustic input to match it with the discrete units that we hold in our minds and combine to comprehend and generate linguistic expressions. Thus, regarding the speech sound, we are all a bit like Michelangelo: “In every block of marble I see a statue as plain as though it stood before me, shaped and perfect in attitude and action. I have only to hew away the rough walls that imprison the lovely apparition to reveal it to the other eyes as mine see it.”

References

1. Werker, J.F., & Tees, R.C. (1984). Cross-language speech perception: Evidence for perceptual reorganization during the first year of life. Infant Behav. Dev. 7, 49–63; Sebastián-Gallés, N. (2006). Native-language sensitivities: evolution in the first year of life. Trends in Cognitive Sciences, 10(6), 239–241.

2. Cole, R.A., & Jakimik, J. (1980). A model of speech perception. In R.A. Cole (Ed.), Perception and production of fluent speech (pp. 133–163). Hillsdale, USA: Erlbaum.

3. Chomsky, N. (1959). A review of B. F. Skinner’s Verbal Behavior. Language 35, 26–58, page 43.

4. Saffran, J.R., Aslin, R.N., & Newport, E.L. (1996). Statistical learning by 8-month-old infants. Science, 274, 1926–1928.

5. Yang, C.D. (2004). Universal Grammar, statistics or both? Trends in Cognitive Sciences, 8(10), 451–456.

6. Saffran, J.R. (2003). Statistical language learning: mechanisms and constraints. Current Directions in Psychological Science, 12(4), 110–114.

7. Bijeljac-Babic, R. et al. (1993). How do four-day-old infants categorize multisyllabic utterances? Dev. Psychol. 29, 711–721.

8. Maye, J., Werker, J.F., & Gerken, L. (2002). Infant sensitivity to distributional information can affect phonetic discrimination. Cognition 82, 101–111; Gomez, R.L., & Gerken, L. (1999). Artificial grammar learning by 1-year-olds leads to specific and abstract knowledge. Cognition 70, 109–135; Saffran, J.R., & Wilson, D.P. (2003). From syllables to syntax: Multi-level statistical learning by 12-month-old infants. Infancy 4, 273–284.
