What is reason for?

What does reason really consist in? What is it for? And why are humans, of all the millions of species that have populated the earth, the only one that enjoys such a wonderful capacity? The French psychologist Hugo Mercier and the anthropologist Dan Sperber (though in both cases the labels say little about the multifarious work they have been doing) advance in their recent book The Enigma of Reason 1 a provocative theory that tries to answer these and other pressing questions, such as the problem that, if reason is such a helpful cognitive instrument, why is it that we reason so miserably badly so often? For example, how is it that a genius like Isaac Newton could devote so much time and effort to unintelligible reflections on alchemy and other ‘occult sciences’? Or, turning to closer and more ordinary cases, how is it that so many people are so easily convinced of the fake virtues of lots of pseudo-therapies? By the way, if you think that you are immune to those types of ‘mistakes’, a brief look at the literature on cognitive biases should make you think twice (more on this below).

Sperber and Mercier’s foil throughout the book is what they call ‘the intellectualist theory of reasoning’. This view comprises two claims. The first is that there is such a thing as ‘Reason’ (i.e., the capacity to produce and recognise sound, valid arguments, or ‘reasons’, that lead us to correct beliefs and appropriate decisions), a capacity we humans have the potential to grasp and obey. The second is that we often exercise it poorly, owing to the ‘interference’ of other elements of our psychology (emotions, bad memory, inattention, etc.), or just because our capacity for getting reasons right is cognitively inefficient. The authors complain that this traditional view is deeply incompatible with a Darwinian view of human nature. If reason is a biological adaptation (and what else could it be, after all?), then it should perform close to optimally in most cases: irrationality should be limited to marginal instances, and systematic deviations from rational thinking should not exist, or should be very scarce.

Mercier and Sperber suspect that the nature of reason (what it is, and what it is for) has been deeply misunderstood within this intellectualist approach. Reason should not be seen as a capacity intrinsically pertaining to the individual mind and directed to the production of optimal beliefs and decisions for the individual, as if the paradigm of ‘reasoning’ consisted in the solitary lucubrations and syllogism-threading of a single mind, like an isolated and impartial scientist painstakingly discovering the true laws of nature by thinking in her study or performing clever experiments without any communication with other humans. In particular, it is difficult to explain from this perspective why we are so strongly subject to what psychologists call the ‘confirmation bias’ (or, as these authors prefer to say, the ‘myside bias’):

“ A lot of evidence shows that reasoning has (this type of) bias. Reason rarely questions reasoners’ intuitions, making it very unlikely that it would correct any misguided intuitions they might have. This is pretty much the exact opposite of what you should expect of a mechanism that aims at improving one’s beliefs through solitary ratiocination… A strong, universal bias is unlikely to be a mere bug, a bad thing. Instead, it is more likely to be a useful feature. What goal could the myside bias serve?” (Ch. 11).

So, when we reason, we far more often just offer ex post justifications of our intuitions or opinions (which are often inspired by our prejudices) than try to find arguments that expose the mistakes we may have made. We even invent justifications of our attitudes, actions or beliefs in experiments designed to show that the real causal factors behind them are contextual, and possibly unconscious. For example, facing a choice between products that are in fact identical, people have no problem finding arguments that explain their choices, even though the experiment shows that people simply tend to choose the products placed to the right (one classical reference is Nisbett and Wilson, 1977 2).

All this could easily be explained, Mercier and Sperber say, if reason is basically a social capacity: something that has evolved in a species particularly dependent on social interaction and cooperation, and that finds its most natural exercise in all types of social settings, but mainly in the need to reach appropriate (and usually collective) beliefs and decisions through public argumentation, i.e., through dialogue and debate. Hence, Mercier and Sperber have christened their view ‘the argumentative theory of reason’, as well as, in this book, ‘the interactionist theory of reason’. An essential difference between the two views of reason is that, according to the latter, rather than expecting that we should be well adapted to produce and assess reasonings ‘in the abstract’, or ‘in the disinterested pursuit of truth’, what we should expect is that we are particularly fit to take part in debates, in which we try to persuade others of our points of view, and try to dismantle the points of view of our fellows (or opponents). We do not have, so to speak, an innate mechanism forcing us to police our cognitive intuitions through abstract, logical reasoning. Those intuitions, coming from modular brain mechanisms, usually work well enough in the specific contexts in which they have evolved to function (by the way, Sperber and Mercier also argue that reason itself has a modular nature, but I prefer to leave this for another time). Instead, we are rather good at detecting failures in the arguments that defend the convictions of other people. Evolution has not provided us with a detector of fallacies in our own beliefs, because it was easier for it to delegate this task to the people surrounding us. The most efficient way of forcing us to reason well, hence, is not solitary thinking, but experiencing (and also imagining) the rebuttals we get from others, and so starting to devote some effort to finding good reasons.

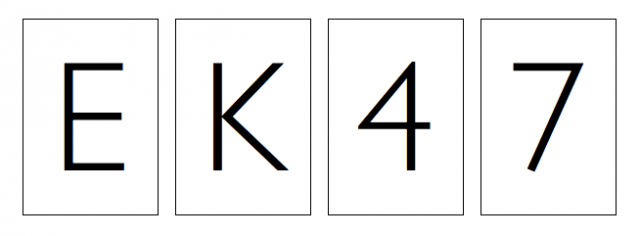

Perhaps the clearest example Mercier and Sperber offer comes from the analysis of the famous ‘Wason selection task’ 3. The experimental subjects are shown four cards whose visible sides bear the symbols E, K, 4 and 7, and have to say which cards must be turned over to check whether it is true that a card with an E on one side always has a 4 on the other side (it is assumed that all cards have a letter on one side and a number on the other). As is well known, statistically we are terribly bad at solving this problem, but the most important point now is not that, but the fact that we do not find it difficult to offer explanations of our choices in the selection task, no matter whether our choice was right or wrong. Furthermore, people’s explanations are systematically bad, and equally bad, both when they are given to justify a wrong choice and when they justify a right choice. However, when the subjects that have to solve the Wason task are not separate individuals, but groups, then the rate of right solutions grows to almost universal success: i.e., it seems we are much more efficient in producing good reasons when we deliberate in public than when we do it in isolation.
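For readers who want to check the logic of the task for themselves, here is a minimal sketch in Python. It is only an illustration, not anything taken from the book: the function names `violates` and `cards_to_turn` are made up for this post, and the sketch assumes that the only possible letters are E and K and the only possible numbers are 4 and 7. A card needs to be turned over only if some possible hidden side would make the rule false.

```python
# Illustrative assumption: hidden sides can only carry these symbols.
LETTERS = ("E", "K")
NUMBERS = ("4", "7")


def violates(letter, number):
    """True if a card with this letter and number breaks the rule
    'a card with an E on one side has a 4 on the other'."""
    return letter == "E" and number != "4"


def cards_to_turn(visible_faces):
    """Return the visible faces whose hidden side could falsify the rule."""
    needed = []
    for face in visible_faces:
        if face in LETTERS:
            # Visible letter: the hidden side is some number.
            could_violate = any(violates(face, n) for n in NUMBERS)
        else:
            # Visible number: the hidden side is some letter.
            could_violate = any(violates(l, face) for l in LETTERS)
        if could_violate:
            needed.append(face)
    return needed


print(cards_to_turn(["E", "K", "4", "7"]))  # ['E', '7']
```

The 4 card is the tempting but useless choice: whatever letter is behind it, the rule cannot be broken, whereas an E behind the 7 would refute it.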

Reason, in conclusion, has to be understood, from a biological point of view, as an adaptation to certain types of interaction, in much the same way as the evolution of mammals’ capacity to produce and digest milk must necessarily be seen as the evolution of a link between individuals, more than as the evolution of an individual trait.

References

- Mercier, H., and D. Sperber. 2017. The Enigma of Reason: A New Theory of Human Understanding. Cambridge, MA: Harvard University Press.

- Nisbett, R. E., and T. D. Wilson. 1977. “Telling more than we can know: Verbal reports on mental processes”. Psychological Review, 84(3): 231–259.

- Wason, P. C. 1968. “Reasoning about a rule”. Quarterly Journal of Experimental Psychology, 20(3): 273–281.

5 comments

[…] What is reason, really? A characteristic of the individual human, that basically irrational being? Or something else? Jesús Zamora in What is reason for? […]

You say:

“the problem that, if reason is such a helpful cognitive instrument, why is it that we reason so miserably badly so often?”

I understand the interest in understanding the evolution of reason; what I do not understand is why the particular question above poses any paradox, as seems to be implied. It is like asking: if strong arms are a good physical trait, why is it that we fail miserably at lifting heavy objects? Is it that difficult to appreciate that too much strength, like too great a reasoning capability, is expensive?

I can find no credit for the image. Is it under a CC license?

Actually, we do not know. We found it on the Internet and, even though we tried to find the original author, we could not find any hint.

[…] What is reason for?, by Jesús Zamora Bonilla […]